Division of Science, NAOJ

De-noising cosmic mass density maps with deep learning

Gravitational lensing is a relativistic effect that causes characteristic distortions in the images of distant astrophysical sources. Although the induced distortion is tiny for any individual source, averaging over the shapes of many sources enables us to map the large-scale cosmic matter distribution. Wide-field galaxy surveys allow us to generate so-called weak lensing maps, but actual observations suffer from noise due to the imperfect measurement of galaxy shape distortions and to the limited number density of source galaxies.
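The role of source number density can be illustrated with a minimal sketch, which is not taken from the paper. It assumes a single shear component, Gaussian intrinsic shape scatter, and illustrative values chosen here (`sigma_e = 0.3` for the scatter and a true shear of `0.01`); the function names are hypothetical.

```python
import numpy as np

def noisy_mean_shear(true_shear, n_galaxies, sigma_e=0.3, seed=0):
    """Average the observed ellipticities of n_galaxies sources in one map pixel.

    Assumption: each observed ellipticity is the small lensing signal plus
    Gaussian intrinsic shape scatter of width sigma_e (illustrative value).
    """
    rng = np.random.default_rng(seed)
    observed = true_shear + rng.normal(0.0, sigma_e, size=n_galaxies)
    return observed.mean()

def expected_noise(n_galaxies, sigma_e=0.3):
    """Standard error of the mean: the noise falls as 1/sqrt(N)."""
    return sigma_e / np.sqrt(n_galaxies)

if __name__ == "__main__":
    true_shear = 0.01  # assumed signal, much smaller than sigma_e
    for n in (10, 1_000, 100_000):
        estimate = noisy_mean_shear(true_shear, n)
        print(f"N={n:>7}: estimate={estimate:+.4f}, "
              f"expected noise={expected_noise(n):.4f}")
```

Under these assumptions, a pixel containing only a few galaxies is dominated by shape scatter, which is why a limited source density leaves residual noise in the map.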

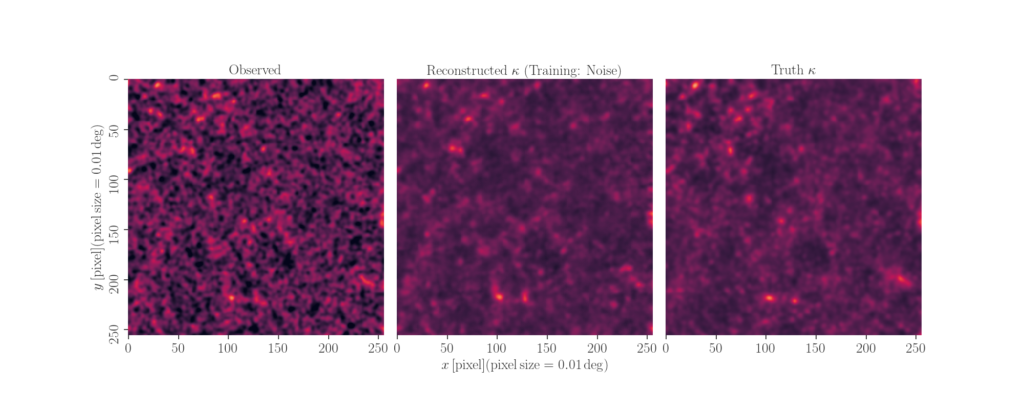

Dr. Masato Shirasaki (Division of Science, National Astronomical Observatory of Japan), Prof. Naoki Yoshida (Univ. of Tokyo), and Prof. Shiro Ikeda (The Institute of Statistical Mathematics) explore a deep-learning approach to reducing this noise. They develop an image-to-image translation method based on conditional adversarial networks (CANs), which learn an efficient mapping from a noisy input weak lensing map to the underlying noiseless field. They train the CANs on 30,000 image pairs obtained from 1,000 ray-tracing simulations of weak gravitational lensing. The figure shows an example of image-to-image translation by the networks. The left panel shows a noisy input lensing map, and the right panel shows its true (noiseless) counterpart. The middle panel shows the map reconstructed by the networks. In each panel, brighter (darker) regions correspond to higher (lower) matter density. In the paper, they investigate the robustness of the deep-learning de-noising method and propose using the de-noised maps for cosmological analyses.
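For readers unfamiliar with conditional adversarial networks, one widely used training objective for image-to-image translation can be written as follows. This is an illustrative formulation, not necessarily the exact loss used in the paper. The symbols are assumptions introduced here: $x$ is a noisy lensing map, $y$ its noiseless counterpart, $G$ the generator that produces the de-noised map, $D$ the discriminator, and $\lambda$ a weight on the pixel-wise term.

$$
\mathcal{L}_{\rm cGAN}(G, D) = \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x}\left[\log\left(1 - D(x, G(x))\right)\right]
$$

$$
G^{*} = \arg\min_{G}\max_{D}\; \mathcal{L}_{\rm cGAN}(G, D) + \lambda\, \mathbb{E}_{x,y}\left[\lVert y - G(x) \rVert_{1}\right]
$$

In this form the discriminator learns to distinguish true pairs $(x, y)$ from generated pairs $(x, G(x))$, while the generator is pushed both to fool the discriminator and to stay close to the noiseless map pixel by pixel.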

2019/08/19

Reference:

Shirasaki, M., Yoshida, N., Ikeda, S. Physical Review D, 100, 043527 [ADS] [doi]

Contact:

Masato Shirasaki [researchmap]